Recording Test Coverage for Java with Kubernetes

This how-to shows you how to set up test coverage recording for a Java application, or an application in any other language running on the Java Virtual Machine (JVM), that is deployed to a Kubernetes cluster, so you can use Test Gap analysis. The setup works for both automated and manual tests.

Coverage is recorded using the Teamscale Java Profiler, which can attach to any JVM and record the lines of code executed during any test, manual or automated. The profiler also uploads the recorded coverage to Teamscale automatically, either when the JVM shuts down or at configurable time intervals.

Prerequisite: Generate git.properties

To upload the coverage to the correct commit in Teamscale, add a git.properties file to your jar/war. Depending on your build environment, there are multiple options.

If you build with Maven, add the following plugin to your pom.xml:

```xml
<build>
  <plugins>
    <plugin>
      <groupId>io.github.git-commit-id</groupId>
      <artifactId>git-commit-id-maven-plugin</artifactId>
      <version>9.0.1</version>
      <executions>
        <execution>
          <id>get-the-git-infos</id>
          <goals>
            <goal>revision</goal>
          </goals>
          <phase>initialize</phase>
        </execution>
      </executions>
      <configuration>
        <generateGitPropertiesFile>true</generateGitPropertiesFile>
      </configuration>
    </plugin>
  </plugins>
</build>
```

Now build and deploy your application.

Adjust Your Dockerfile

This step ensures that all remaining coverage is uploaded when your application shuts down.

By default, the profiler uploads the coverage it has collected when the JVM inside your Docker container shuts down. This requires that the JVM receives the SIGTERM signal sent by your orchestration tooling, so you need to ensure that the JVM is the main process inside the container. You can achieve this by using exec to replace the shell process with your JVM process:

```dockerfile
# this will not work:
CMD java -jar /your.jar

# use this instead:
CMD exec java -jar /your.jar
```

The same applies if you're using ENTRYPOINT instead of CMD:

```dockerfile
# this will not work:
ENTRYPOINT java -jar /your.jar

# use this instead:
ENTRYPOINT exec java -jar /your.jar
```

If your application is wrapped inside a start script, you likewise need to ensure that the script uses exec to replace the shell process with the JVM process.
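If you cannot change the image, a possible alternative is to override the start command in the Kubernetes container spec. The sketch below assumes that your image starts the application with `java -jar /your.jar`. Kubernetes executes `command` directly, without wrapping it in a shell. When only `command` is set, the image's own ENTRYPOINT and CMD are ignored, so the JVM becomes the container's main process. Docker's exec form, e.g. `CMD ["java", "-jar", "/your.jar"]`, has the same effect at image build time. The container name, image, and jar path are placeholders reused from the examples in this guide.

```yaml
containers:
  - name: backend
    image: backend:latest
    # `command` replaces the image's ENTRYPOINT (and, without `args`, its CMD).
    # Kubernetes runs it directly without a shell, so the JVM is the main
    # process and receives SIGTERM when the pod is stopped.
    command: ["java", "-jar", "/your.jar"]
```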

Set Up a Profiler Configuration

The profiler's configuration can be stored within Teamscale. This makes it easy to change and audit. To configure the profiler for your application, go to the Project Configuration > Coverage Profilers view. Open the dropdown of the New profiler configuration button and click on Create for a JVM (Java, Kotlin, ...) project.

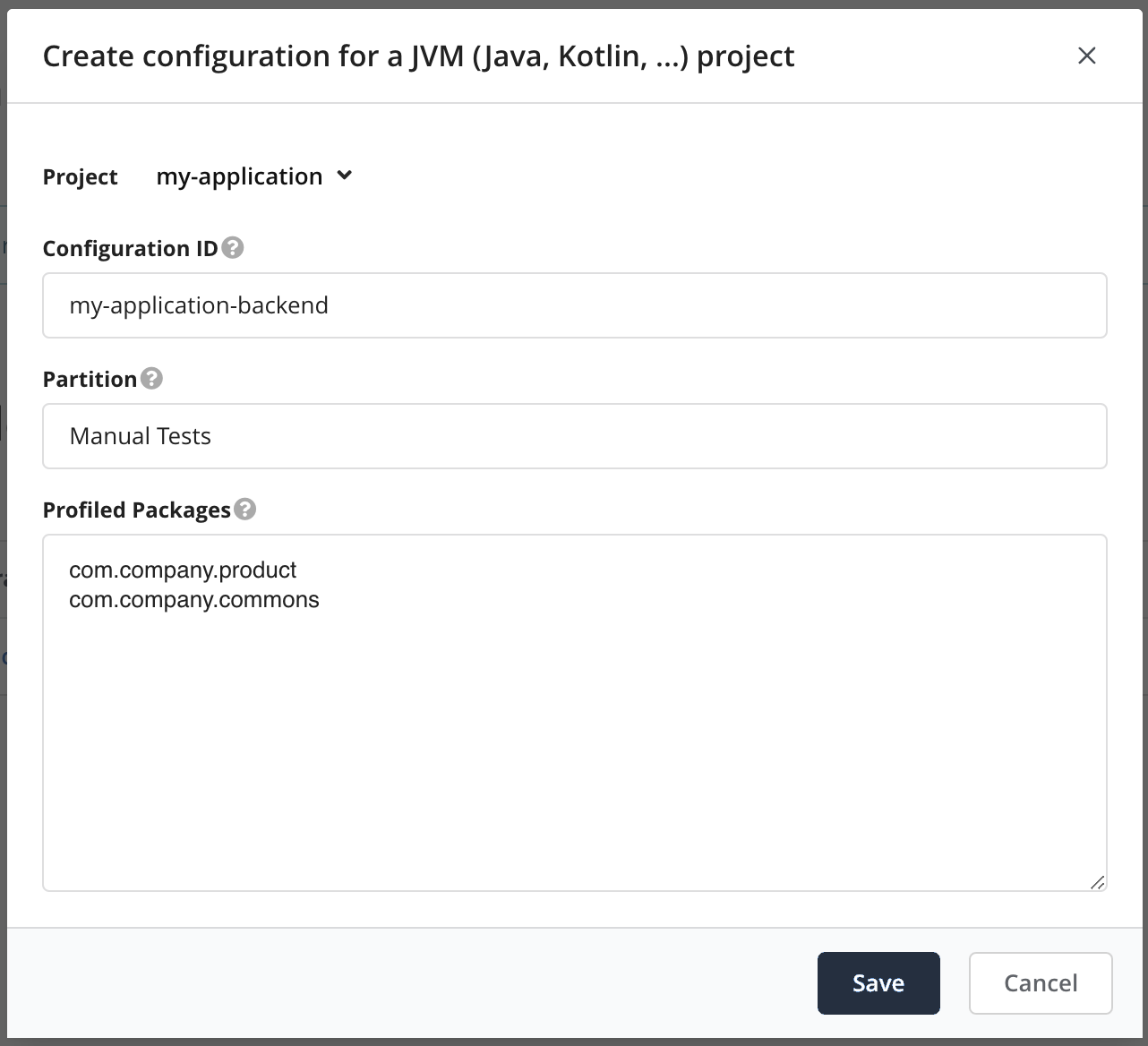

You will be presented with a dialog that lets you generate a profiler configuration for a Teamscale project.

- Select the project that you want to collect coverage for.

- Choose a configuration ID. This ID will be used when starting the profiler so that it knows which configuration to use.

- Select a partition, i.e., a logical name that groups related coverage, such as the type of test that will be profiled (e.g., Manual Test, Unit Test, Regression Test).

- Select all packages that should be profiled. Subpackages are included as well.

Selecting Appropriate Packages

Teamscale automatically suggests packages to profile. Please review them carefully.

Please ensure the packages you select are "future-proof", i.e., they also match packages you might add in the future. This saves you time later, because you won't have to adjust the profiler configuration whenever you add new packages.

Make sure not to profile broad packages that are also used by third-party software, e.g., net.java or com.

Finally, click Save.

The generated profiler configuration looks similar to the following:

includes=*com.company.product.*;*com.example.commons.*

teamscale-project=my-application

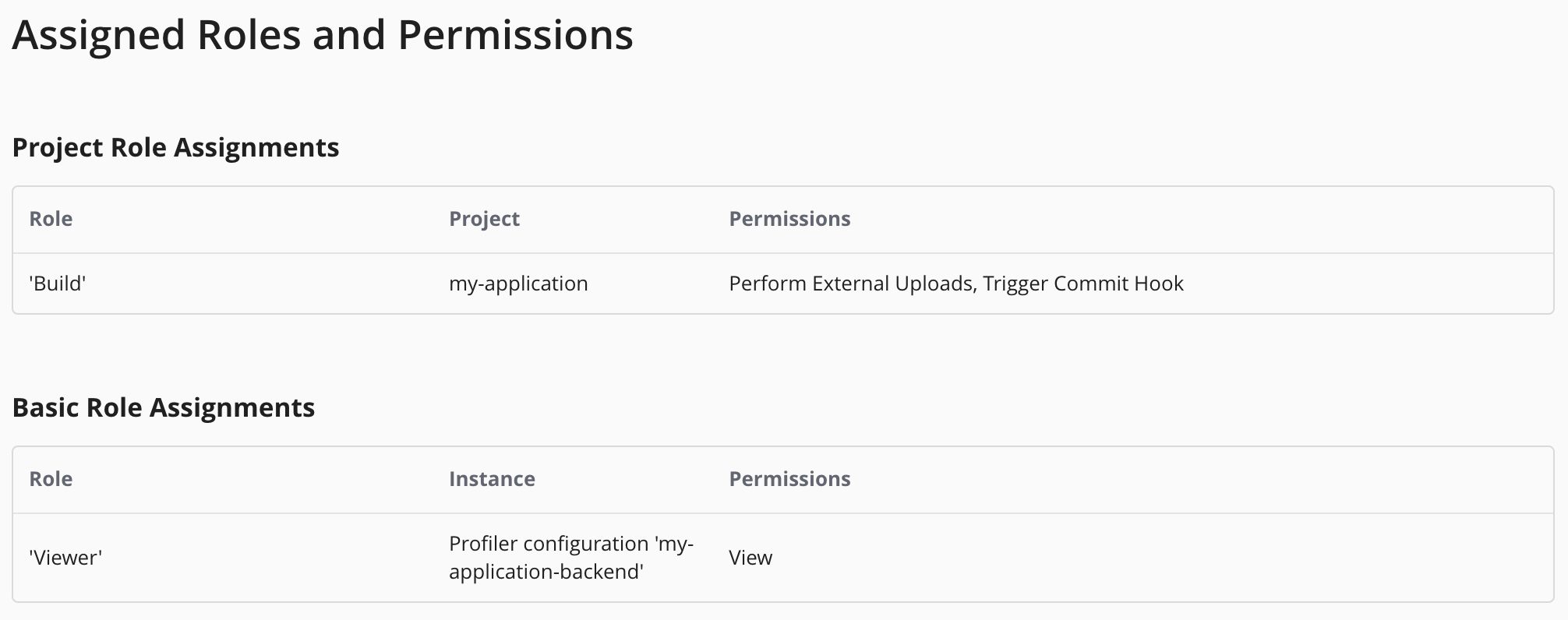

teamscale-partition=Manual TestsNext, please go back to the Project Configuration > Coverage Profilers overview. Click on the button next to the profiler configuration that you just created and assign Viewer permissions to the technical user that the profiler uses to connect to Teamscale.

Then go to Admin > Users and select the technical user whose credentials should be used for the coverage upload. Ensure that this user has the Perform External Uploads permission for the project, e.g., by assigning the predefined Build project role to the user.

Generate an access key for this user by clicking Generate New Access Key and save it. You will need it in the next step.

Deploy the Profiler

To deploy the profiler to a pod, create a volume to hold the profiler files, use an init container to copy the profiler into that volume, mount the volume into each container that should be profiled, and activate the profiler with a few environment variables. Here is a simple example deployment YAML file with the necessary modifications:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: backend
  labels:
    app: backend
spec:
  selector:
    matchLabels:
      app: backend
  template:
    metadata:
      labels:
        app: backend
    spec:
      volumes:
        # add a volume that will hold the profiler files
        # it can be shared between all containers in this pod
        - name: teamscale-java-profiler
          emptyDir: {}
      initContainers:
        # add our init container to copy the profiler to the `teamscale-java-profiler` volume
        - name: prepare-profiler
          image: cqse/teamscale-java-profiler:latest
          volumeMounts:
            - name: teamscale-java-profiler
              mountPath: /transfer
      containers:
        - name: backend
          image: backend:latest
          volumeMounts:
            # mount the profiler
            - name: teamscale-java-profiler
              mountPath: /teamscale-java-profiler
          env:
            # enable the profiler
            # replace with your Teamscale instance's URL and username
            - name: JAVA_TOOL_OPTIONS
              value: "-javaagent:/teamscale-java-profiler/teamscale-jacoco-agent.jar=teamscale-server-url=https://your.teamscale.url,teamscale-user=your-build-user-name"
            # set the access token from a secret
            - name: TEAMSCALE_ACCESS_TOKEN
              valueFrom:
                secretKeyRef:
                  name: teamscale
                  key: access-token
            # set the profiler configuration ID from the previous step
            - name: TEAMSCALE_JAVA_PROFILER_CONFIG_ID
              value: "my-application-backend"
      # set an appropriate termination grace period so the profiler has
      # a chance to upload coverage. See below.
      terminationGracePeriodSeconds: 180
```

We recommend storing the access token in a secret, e.g.:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: teamscale
data:
  access-token: BASE64_ENCODED_ACCESS_TOKEN
```

Istio: Additional Steps Required

If you are using Istio in your cluster, you must set holdApplicationUntilProxyStarts to true to ensure that the Istio network is fully set up before your application container starts. Otherwise, you may experience connection failures when the Teamscale Java Profiler tries to retrieve its configuration from Teamscale, leading to missing coverage.
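One way to enable this for a single workload is to annotate the pod template of the deployment shown above. This sketch assumes that your Istio version supports the `proxy.istio.io/config` pod annotation. Istio can also enable the option mesh-wide via `meshConfig.defaultConfig.holdApplicationUntilProxyStarts`. Check the documentation of your Istio version for the approach it recommends.

```yaml
spec:
  template:
    metadata:
      labels:
        app: backend
      annotations:
        # start the Istio sidecar proxy first and hold the application
        # container until the proxy is ready
        proxy.istio.io/config: '{ "holdApplicationUntilProxyStarts": true }'
```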

Debugging Setup Problems

By default, the profiler writes its logs to /tmp/teamscale-java-profiler-<PID>-<random string>/logs/ inside your container. If the setup does not work as expected, check this log for reported problems.

You can also make the profiler log debug information to stdout by appending ,debug=true to the profiler options in JAVA_TOOL_OPTIONS.
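For example, the `JAVA_TOOL_OPTIONS` entry from the deployment above would then look like the following. The URL and user name are the same placeholders as before. Kubernetes collects a container's stdout, so the debug output should appear in `kubectl logs` for the pod.

```yaml
env:
  # same as before, with ",debug=true" appended to the profiler options
  - name: JAVA_TOOL_OPTIONS
    value: "-javaagent:/teamscale-java-profiler/teamscale-jacoco-agent.jar=teamscale-server-url=https://your.teamscale.url,teamscale-user=your-build-user-name,debug=true"
```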

Set an Appropriate Grace Period

When the pod running your application and the profiler is stopped, the profiler uploads all collected coverage to Teamscale, which adds a few seconds to your application's shutdown time. By default, Kubernetes kills any pod that takes longer than 30 seconds to shut down. This interrupts the profiler, so the coverage is never uploaded and is lost.

Thus, you must set a terminationGracePeriodSeconds value that is large enough for your application to shut down cleanly and for the profiler to send its coverage to Teamscale. What is appropriate depends on your application. We recommend running a few experiments to measure how long your application takes to shut down cleanly with and without the profiler enabled, and then adding some buffer on top of the largest measured value. Three minutes is a good starting point that usually works even for large applications.