Glossary

You may stumble upon some unfamiliar terms in Teamscale documentation. This glossary lists the common terms used throughout the documentation.

Access Key

The access key is used to authentication with Teamscale's REST API, e.g., when accessing Teamscale from our IDE plugins or scripts. Teamscale requires this key, instead of the password, to avoid that user passwords are stored and sent by tools communicating through the REST API, and to prevent that scripts break when passwords change (e.g., due to company policies). Every Teamscale user can generate such a key on their personal profile page <TEAMSCALE_URL>/user/access-key, also accessible via the user icon in the upper right corner.

Analysis Group

An analysis group is an aspect of a quality indicator that exposes options for passing data in order to influence analysis of code in Teamscale. The options may be of metrics or running of custom checks.

Analysis Profile

An analysis profile comprises a set of analyses and their configurations. For each language, there is a default analysis profile, but Teamscale users can also configure their individual profiles tailored to suit their needs.

Ant Pattern

A pattern, as used by Ant and other tools, to match one or more paths. A question mark (?) matches a single character within a path component but not the path separator. Likewise, a single asterisk (*) matches zero or more characters within a path component but not the path separator. In contrast, a double asterisk (**) matches zero or more characters including the path separator. Examples of Ant patterns are **/*.java or include/*.?. In Teamscale, Ant patterns are matched case-insensitively unless otherwise noted.

Assessment Metric

An assessment metric is a metric which divides the system into parts belonging to the green assessment, the yellow and the red one. Example: The metric File Size is an assessment metric. Green files are, for example, those with less than 300 SLOC, yellow are those with less than 750 SLOC and the remaining ones are red.

Baseline

A baseline is a point in time defined by the user. A baseline is often used as reference point to compare the current quality status against, for example in the delta analysis. In Teamscale, a baseline is defined with a calendar date and a corresponding label. It is thereby independent of the underlying branch in case branch support is enabled.

Branch and Timestamp

As Teamscale supports several version control systems, it allows to use a VCS agnostic system to point to a specific code commit. The format of this system is branchName:timestamp. The timestamp is either the elapsed milliseconds since epoch (e.g. 1576093049000 = Wed Dec 11 2019 19:37:29 UTC) or "HEAD". HEAD points to the latest commit.

The timestamp can be seen in the URL of the commit in Teamscale. It is the value of the query parameter t e.g. t=release%2F4.2.3%3A1576093049000 in URL encoded format can be decoded to t=release/4.2.3:1576093049000 which gives the Branch and Timestamp of release/4.2.3:1576093049000

Examples:

master:HEAD: The most recent commit known to Teamscale in the master branch.my-branch:1576093049000: Branch and timestamp.

Branch Support

If enabled, the branch support feature allows Teamscale to not only analyze the »master« or »trunk« of a repository, but also its branches.

Change Count

This metric counts how many commits every source code file changed over a particular time interval (e.g., from one commit to another, or for the whole history computed in Teamscale). The Change Count metric is applied to every file modified in the computed history, and can be aggregated hierarchically by source code folder all the way up to the project root. Note this metric depends on which branch you look, since a merge commit from one branch to another will count only once, rather than adding up all commits that possibly changed a given file before merge on the source branch. Also, file addition and deletion do not count.

Clone Coverage

The clone coverage indicates the percentage of code lines which are covered by at least one clone. It can be interpreted as the probability that a random (evenly distributed) change to a code line needs to be propagated to at least one other clone instance.

Component

In an architecture specification, a system is modeled as a hierarchy of components. A component has a unique name and may have sub-components and mappings.

Custom Artifact Metric

A metric that is attached to custom artifacts, e.g., the build status of your system. Teamscale offers a flexible mechanism to integrate show such custom metrics.

Custom Check

A custom check is an analysis implemented by the user which can be integrated in Teamscale. Teamscale also comes with a large set of pre-written checks.

Cyclomatic Complexity

This metric is also known as the »McCabe metric«. It measures the number of linearly independent paths through a program's source code. It is useful for establishing the number of test cases needed to cover the control flow of the method completely, but is not a good predictor for method complexity. The implementation used by Teamscale employs the definition given in McCabe's original paper.

Cross-Annotation

Cross-annotation allows you to include coverage from other Teamscale projects when analyzing test gaps. A method is considered covered if it's covered in any of the current project's selected partitions or in a selected cross-annotation project (across any branch/partition), provided the method's code is identical. This is particularly useful in scenarios where development and testing are separated across different systems, such as SAP systems with separate dev/test/prod instances where coverage is only collected in the test environment.

Delta

The delta between two snapshots contains the changes from the repository between a start date and an end date as well as differences in the quality status. It also contains the differences in metric values and assessments as well as the finding churn, for example. Often, the start date is referred to as baseline for the delta.

Delta Analysis

The delta analysis computes the delta.

Dialog

A dialog is a UI element of the Teamscale web interface. It pops up dynamically when the user performs certain tasks and closes when the user has completed the respective task. The user has to press a button to close a dialog.

Excluded Finding

An excluded finding is either tolerated or marked as false-positive.

External Finding

An external finding is a finding created externally by other tools and uploaded to Teamscale. Each upload of external findings will show as an external upload commit in Teamscale.

External Metric

In contrast to metrics created internally by Teamscale, an external metric can be a code metric or any custom artifact metric externally created and uploaded to Teamscale. Each upload of external metrics will show as an external upload commit in Teamscale.

External Upload Commit

These commits are not present in the version control system of the project, but artificially added to Teamscale. They provide data from external analysis tools, such as test coverage tools, and build scripts, for example.

False Positive Finding

A false positive finding denotes a finding which has been incorrectly detected by the analysis, i.e., the static analysis produced a finding even though there is no violation present. To mark a finding as false positive means that this finding will be put in the false positive findings section and not shown directly anymore. In the Code Detail View or in the IDE integration, it will be not shown at all. It can, however, still be inspected in the False Positive View.

File Dependency

In a file-based architecture, the path to the source code files are used for dependencies.

Finding

A finding refers to a specific region in the code, that is likely to hamper software quality. The region in the code is referred to as finding location. Generally, a finding belongs to one finding group. A finding can occur when a metric and its corresponding threshold are violated, for example, when a file exceeds the size limit of 300 SLOC. Then this overly long file becomes a finding. Alternatively, a finding can directly result from a quality analysis. The code clone detection, for example, reveals clones as findings. These clones do not result from a threshold violation of the corresponding clone coverage metric, but are findings themselves. Each finding has an introduction date in Teamscale.

Findings Badge

Teamscale can add findings badges when voting on merge requests. They represent an easy to comprehend visualization of the findings churn between two commits (in this case, the merge source and target).

A findings badge can be read as follows:

- Red: Findings which have been added in this churn.

- Blue: Old findings in code, which has been changed in this churn.

- Green: Resolved findings in this churn.

Finding Description

A finding description provides information about the nature of the finding and its impact on quality. For external findings, finding descriptions are used to associate findings with their findings group.

Finding Group

A finding group describes a single analysis or a group of analyses creating findings. For example, there are three different finding groups »File Size«, »Method Length« and »Nesting Depth« which represent one analysis each. The finding group »Comment quality« comprises several analyses, detecting unrelated member comments or empty interface comments, for example. A finding group is always associated with one finding category.

Finding Category

A finding category combines several finding groups. For example, the finding category »Structure« contains the three groups »File Size«, »Method Length« and »Nesting Depth«. A finding category represents a quality indicator.

Finding Location

The location can span several files (e.g., a code clone), a single file (e.g., an overly long file), a method (e.g., an unused method) or a single line (e.g., a naming convention violation).

Findings Count

This metric denotes the number of all findings for a project. Naturally, it depends on which analyses are configured.

Findings Churn

A finding churn indicates for a given time internal, how many findings were added to or removed from the system.

Impacted Test

An Impacted Test for a given set of changes is a test that executes some of the changed methods.

Introduction Date

The introduction date refers to the revision in which a finding was first detected by Teamscale. For internal analysis, this refers to the revision with which the developer introduced the finding in the code base. For external analysis, which are integrated via a nightly build, this refers to the revision with which the finding was first uploaded to Teamscale. Hence, the introduction date then refers to the first detection time, not the actual introduction time.

Issue Metric

An issue metric is the numeric result of an issue query which can be saved in Teamscale and, thus, be used continuously.

Java timestamp / Unix Timestamp

The Unix epoch time (time since January 1, 1970 00:00:00.000 GMT) in milliseconds.

Line Coverage

Line coverage records which source code lines were executed during a test run. Each line is reported as covered or uncovered. This provides fine-grained visibility into code execution but requires instrumentation that typically has a higher performance impact than method coverage.

Lines of Code

Abbreviated as LOC, this metric counts all lines of code of a file as displayed to the developer. The count includes empty lines and comments. See also Source Lines of Code.

Mapping

Mappings between code and architecture define which implementation artifacts belong to which component in the architecture definition. If the architecture is based on file dependencies, a mapping specifies which files belong a component. For type dependencies, it specifies which types map to a component.

Mapping File

Contain mappings from the information in a coverage file to the original source code lines, e.g., line number translation tables or mappings from method IDs to source code lines.

Method Coverage

Method coverage records which methods were called during a test run. Each method is reported as covered or uncovered, regardless of which lines within it were executed. Method coverage has lower performance overhead than line coverage and is sufficient for Test Gap Analysis, which operates at method granularity.

Method Length

This metric is an assessment metric and denotes the distribution of code in short (green), long (yellow) and very long (red) methods. The length of each method category is configured by a threshold in the analysis profile.

Metric

A metric captures an automatically measurable aspect of software quality. In can either be a numeric metric or an assessment metric.

Nesting Depth

This metric is an assessment metric and denotes the distribution of code over methods with shallow (green), deep (yellow) and very deep (red) nesting. Each nesting category is configured by a threshold in the analysis profile.

Numeric Metric

A numeric metric is a metric that consists of a single numeric value. Example: The clone coverage is an example of a numeric metric. Its value could be 20%. Another example is the metric number of files. Its value could be 4000.

Orphan

If a file or a type are not mapped to any component in an architecture specification, they are marked as orphan.

Page

A page is a UI element of Teamscale’s web interface. If a perspective has a sidebar on the right hand side, then each entry in this sidebar represents a page.

Partition

A partition is a logical group of external analysis data, identified by a label (an arbitrary string that should be descriptive of the group). Whenever you upload external data, such as code coverage or analysis findings, to Teamscale, you must specify a partition. The partition is implicitly created by the first upload to it. Subsequent uploads to a partition will overwrite all data previously uploaded to the same partition.

For example, if you collect code coverage of both your unit tests and your automated UI tests, you would upload the coverage from either test stage to a distinct partition, say Unit Tests and UI Tests. Teamscale will then consider the union of both partitions, i.e., a line in your source code is then counted as covered, if it is either covered by the unit or by the UI tests or both.

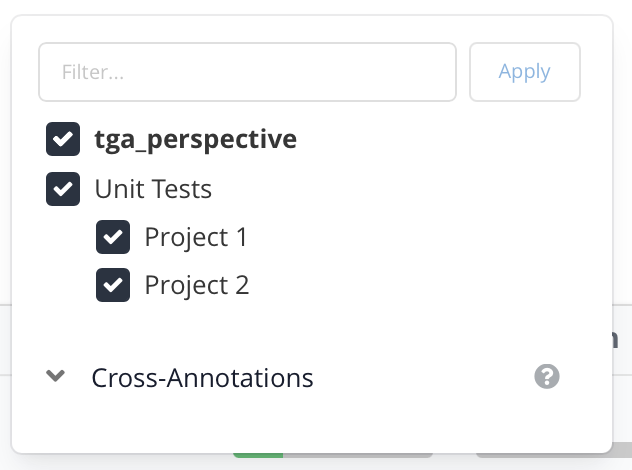

Partition Grouping

The character > can be used in partition names to define a hierarchy, which is rendered in a tree-like format e.g. in the Test Gap Overview and makes it easy to view coverage data for select partitions. For example, Unit Tests > Project 1, Unit Tests > Project 2.

Perspective

A perspective is the main UI element in Teamscale. Toplevel, Teamscale consists of several different perspectives which can be navigated in the top header row of the web UI.

Policy

A policy in an architecture specification determines which components may be in a relationship (allow, deny, tolerate) with each other.

Pre-Commit Analysis

Pre-commit analysis allows a Teamscale client like an IDE plug-in to submit source code for analysis to the Teamscale server even though the code hasn't been committed to a version control system yet. The server then analyzes the code changes on-the-fly and informs the client about any change to the number of findings that would occur were the code changes actually committed.

Quality Report

A quality report is a document reflecting the overall quality of a project and the trends in project quality since a baseline. A quality report may contain findings from project code, metrics, tasks and other aspects of project quality. A Teamscale-generated quality report is a collection of slides, each reflecting a certain aspect of project quality.

Quality Indicator

A quality indicator denotes a certain quality aspect. For example, »Structure« or »Code Duplication« represent quality indicators.

Quality Goal

A quality goal represents the target line for the quality status of a system. It can have one of the following four values: perfect (no findings at all), improving (no new findings and no findings in modified code), preserving (no new findings) or none (any number of findings).

Requirements Tracing

Requirements Tracing is a Teamscale analysis that helps you establish a traceable connection between the spec items in your requirements management system, the code entities (classes, methods, etc.) that implement specific items, and the tests that ensure the spec items have been correctly implemented. The building blocks of the analysis are the Requirement Management Tool connectors, source code comment analysis and static test case extraction.

Revision

A revision uniquely identifies a commit, i.e., a change to one or more files, in a version control system. As an example, in Git, commits are identified by their SHA-1 Hash. Instead of using the whole hash value, a unique prefix is enough to identify the commit.

Rollback

If Teamscale detects an inconsistency or change in the analyzed data, it will automatically trigger a rollback to the date of the change. This means that Teamscale will reanalyze all the commits that are affected by the change, to ensure that all the changes are propagated through the commit history. A rollback typically occurs when the commit structure has changed (e.g. due to git rebase) or external reports have been uploaded.

Section

A section is a part of a view that is independent of the other content of that view, e.g., one of the main tables of the Metrics perspective.

Severity

The severity of a finding is expressed by either a yellow or a red color. It can be configured in the analysis profile and used to filter findings.

Size Metric

A size metric indicates the size of a system. It can either count the number of files, lines of code, or source lines of code.

Shadow Instance

A second instance of Teamscale that is run in parallel to your production instance during feature version updates. This allows your users to still access the old version of Teamscale while the new one is still analyzing.

Shadow Mode

In this mode, Teamscale will not publish data to external systems (e.g., via notifications or merge-request annotations) and will not fetch data from SAP systems. See also our admin documentation.

Source Lines of Code

Abbreviated as SLOC, this metric counts the total lines of code of a file as displayed to the developer, and subtracts blank lines and comment lines.

Specification Item

A specification item (or spec item) in Teamscale is a unit of work managed in a requirements management system. Examples of spec items include system/software requirement items, parent component items, test case items, etc.

Task

A task in Teamscale is a concept to schedule findings for removal and keep track of the process. Multiple findings can be grouped together to one task.

Test Execution

A test execution is a single data point for a test that was executed. It includes which result the test produced (passed, failed, skipped, etc.), how long it took to execute and optionally an error message.

Test Gap

A Test Gap is a method in the source code of your software system whose behavior was changed and that has not been executed in tests since that change. Let's look at the individual parts of this definition:

- A Test Gap is in your source code. TGA cannot identify changes to the behavior of your software system that are caused by changes in configuration or data, because we cannot generally determine which parts of a system are effected by such changes.

- A Test Gap contains a behavioral change. To identify such changes, Teamscale analyses the change history in your source code repository. Moreover, it applies a refactoring detection, to filter changes that are not behavioral, such as changes to code comments or renaming of variables, methods, classes or packages.

- A Test Gap has not been executed in any test. Therefore, you cannot have found any defect hidden in a Test Gap in your testing. Note that the inverse does not hold: Code changes that have been executed in one or multiple tests may still contain defects. As with any testing approach, TGA cannot guarantee the absence of defects.

Test Gap Analysis

The Test Gap Analysis (TGA) can detect holes in the test process by uncovering code that was not tested after its latest modification. The TGA can combine test coverage from different origins (e.g., manual tests and unit tests). The start date after which code modifications are considered is called ”Baseline” and is an important parameter of each TGA inspection.

Test Gap Badge

The Test Gap Badge is the visual representation of test gap data which is often added to merge requests by Teamscale.

The Test Gap badge can be read as follows:

- Ratio: The Test Gap Ratio.

- Green: Number of changed and added methods which have been tested.

- Yellow: Number of changed methods which have not been tested.

- Red: Number of added methods which have not been tested.

Test Gap Ratio

This metric denotes the number of modified or new methods not covered by tests divided by the total number of modified or new methods.

Test Impact Analysis

The Test Impact Analysis (TIA) can select and prioritize regression tests given a concrete changeset. The TIA uses Testwise Coverage for the test selection. Test covering the given changes are called impacted tests.

Testwise Coverage

Testwise Coverage is the name of a report format based on JSON. It contains a list of tests contained in the set of all available tests, as well as optional coverage per test and execution results of the test.

Threshold

A threshold can be used to derive findings from metrics. For example, the metric »File Size« has two thresholds, set to 300 (yellow) and 750 (red) as default. Hence, files which are longer than 300 SLOC, become a yellow finding. Files longer than 750 SLOC become a red finding.

Threshold Configuration

A threshold configuration comprises a set of thresholds for the analyses.

Timetravel

The timetravel is a feature of Teamscale which makes it possible to show all of Teamscale’s content in a historized form, i.e., to display all information at any point in time of the project’s history.

Tolerated Finding

A tolerated finding denotes a finding which is technically correct, but has been tolerated as it cannot or should not be fixed, e.g., because the detected issue is not relevant or its resolution requires unjustifiably high efforts. To tolerate a finding means that this finding will be put in the tolerated findings section and not shown directly anymore. In the Code Detail View or in the IDE integration, it will be not shown at all. It can, however, still be inspected in the Tolerated Findings View.

Treemap

Treemaps visualize individual metrics in relation to the source code structure and file sizes. Therefore, directories or source code files of the analyzed system are drawn as rectangles. The area of the rectangle corresponds to the number of lines contained in the file (or for directories all the files included in the directory). The position of the rectangle follows the directory structure, i.e., rectangles for files in the same directory are drawn next to each other. The shading of the rectangles indicates the hierarchical nesting of the files and directories.

Trend

A trend indicates the evolution of a metric over time. A trend can be calculated for both a numeric metric and an assessment metric. In this first case, the trend is just a simple function in the mathematical sense. In the second case, the trend chart indicates three values per time: the green, the yellow, and the red value and shows how the system distribution over these three categories evolves. If branch support is disabled, the history of the system and, hence, a metric trend, is linear by default. If branch support is enabled, however, the history of a branch is not necessarily linear. To display a linear trend, the »first-parent« strategy is used to pick one parent for each commit.

Type Dependency

In a type-based architecture, the full-qualified names of all types (classes, structs, enums etc.) in the codebase are used for dependencies.

Uniform Path

File-path-like string that represents a hierarchy. These paths are used to represent all hierarchically structured data in Teamscale e.g. source file locations or test names. Parts of the hierarchy are delimited by forward slashes.

Example: com/example/MyTest/testSomething.

View

A view is a UI element of Teamscale’s web interface. In contrast to pages, it cannot be references by the sidebar but appears and disappears as the user navigates and performs certain tasks.

Violation

If a dependency contradicts a modeled policy, it will be treated as an architecture violation.

Voting

For many code collaboration platforms (e.g., GitHub, GitLab, Bitbucket), Teamscale supports voting. The semantics are slightly different depending on the platform, but in general this is the process of adding a vote to a merge request. This can be a "thumbs up", "+1", or simply a passing build result. In addition to this, Teamscale can also integrate information such as the number of findings, relevant test gaps or similar to the merge requests, this is usually done by enhancing the merge request's description. Last but not least, Teamscale may also add detailed line comments to merge requests, which show relevant findings at the relevant code lines.